
3 Ways AI Transforms Security

Security AI usage has surged, and enterprises are reaping the benefits. In its 2022 Cost of a Data Breach Report, IBM found that organizations deploying security AI and automation incurred $3.05 million less on average in breach costs – the biggest cost saver found in the study. According to the study, organizations using security AI and automation detected and contained breaches faster. However, while leveraging AI clearly makes a difference, organizations must implement the right architecture.

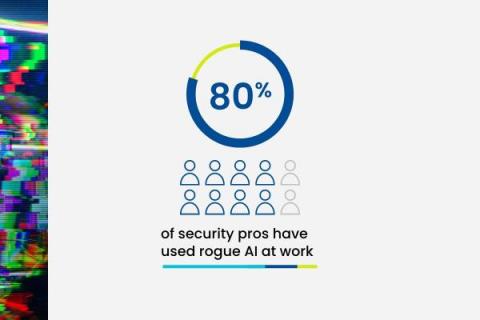

Rogue AI is Your New Insider Threat

When ChatGPT debuted in November 2022, it ushered in new points of view and sentiments around AI adoption. Workers from nearly every industry started to reimagine how they could accomplish daily tasks and execute their work — and the cybersecurity industry was no exception. Like shadow IT, new rogue AI tools — meaning AI tools that employees are adopting unbeknownst to the organization they work for — can pose security risks to your organization.

How Snyk uses AI in developer security

As a leader in applying AI to developer security, Snyk’s approach is unique. Today, we want to provide a glimpse at how Snyk currently uses AI and data science, as well as a sneak peek at what’s to come. Before diving in, we want to highlight two aspects of Snyk’s use of AI to set the stage.

Italy Bans ChatGPT: A Portent of the Future, Balancing the Pros and Cons

In a groundbreaking move, Italy has imposed a ban on the widely popular AI tool ChatGPT. This decision comes in the wake of concerns over possible misinformation, biases and the ethical challenges AI-powered technology presents. The ban has sparked a global conversation, with many speculating whether other countries will follow suit.

Social Engineering Attacks Utilizing Generative AI Increase by 135%

New insights from cybersecurity artificial intelligence (AI) company Darktrace show a 135% increase in novel social engineering attacks that use generative AI. The attacks behind this increase are much more sophisticated, using linguistic techniques such as increased text volume, punctuation, and sentence length to trick their victims. We've recently covered ChatGPT scams and various other AI scams, but this attack proves to be very different.

Fake ChatGPT Scam Turns into a Fraudulent Money-Making Scheme

Using the lure of ChatGPT’s AI as a new way to make money, scammers trick victims with a phishing-turned-vishing attack that eventually takes the victim’s money. It’s probably safe to guess that anyone reading this article has either played with ChatGPT directly or has seen examples of its use on social media. The idea of being able to ask simple questions and get world-class expert answers in just about any area of knowledge is staggering.

The New Face of Fraud: FTC Sheds Light on AI-Enhanced Family Emergency Scams

The Federal Trade Commission is alerting consumers about a more sophisticated family emergency scam that uses AI to imitate the voice of a "family member in distress". The alert opens: "You get a call. There's a panicked voice on the line. It's your grandson. He says he's in deep trouble — he wrecked the car and landed in jail. But you can help by sending money. You take a deep breath and think. You've heard about grandparent scams. But darn, it sounds just like him."

What Generative AI Means For Cybersecurity: Risk & Reward

Artificial Intelligence Makes Phishing Text More Plausible

Cybersecurity experts continue to warn that advanced chatbots like ChatGPT are making it easier for cybercriminals to craft phishing emails with pristine spelling and grammar, the Guardian reports. Corey Thomas, CEO of Rapid7, stated, “Every hacker can now use AI that deals with all misspellings and poor grammar. The idea that you can rely on looking for bad grammar or spelling in order to spot a phishing attack is no longer the case.”